AI on Insecure Web Infrastructure: Security Checklist

AI on insecure web infrastructure creates risk faster than most teams expect. Even so, many organizations are still bolting AI features onto aging web apps with weak permissions, unclear data flows, and third-party dependencies they barely govern.

That is the real problem.

Most companies are not building clean, secure, well-governed AI systems from the ground up. Instead, they are layering AI onto fragile foundations. As a result, what looks like innovation on the surface often becomes risk multiplication beneath the surface.

Therefore, the most important AI conversation is not really about prompts, model sizes, or whichever vendor has the best demo. Rather, it is about whether the stack below the AI is mature enough to support it safely.

AI does not magically modernize weak systems. Instead, it exposes them, accelerates them, and sometimes scales their flaws faster than anyone expected.

Why AI on Insecure Web Infrastructure Is So Risky

Many organizations already struggle to keep their existing web stack under control. For example, they may have outdated plugins, legacy integrations, weak role separation, inconsistent logging, or shared service accounts that nobody wants to touch.

Nevertheless, those same organizations are now under pressure to add AI chat, AI search, AI assistants, AI summaries, AI workflows, or AI-enhanced analytics.

At first, that sounds exciting. However, when AI is layered onto weak infrastructure, several problems appear almost immediately.

- First, the AI system often gets access to more data than it should.

- Second, the organization may not know exactly what data is being sent to third parties.

- Third, prompts and outputs can become new channels for abuse, leakage, and manipulation.

- Finally, teams often discover too late that their monitoring, incident response, and rollback procedures were never designed for AI-driven behavior.

Consequently, the issue is rarely AI by itself. Instead, the issue is that AI amplifies the weaknesses that were already present in the environment.

A fragile application does not become modern just because an AI feature is attached to it. If anything, it becomes harder to defend.

What Leaders Often Miss

A lot of AI discussions focus on productivity. That makes sense. Faster workflows, better automation, and smarter search are all attractive outcomes.

However, the infrastructure conversation usually lags.

For example, a company may ask:

- Can we add AI to the customer portal?

- Can we summarize tickets automatically?

- Can we help employees search internal documentation faster?

- Can we embed AI into product selection, support, or analytics?

Those are reasonable questions. Yet the better question is this:

What exactly will this AI system touch, trust, expose, log, and depend on?

That single question changes the entire conversation.

Instead of thinking only about features, you start thinking about identity boundaries, internal and external data paths, third-party service dependencies, sensitive content exposure, abuse scenarios, recovery plans, and long-term operating costs.

As a result, the organizations that win with AI will not necessarily be the ones that moved first. Rather, they will be the ones that adopted it responsibly, alongside systems they could actually secure and manage.

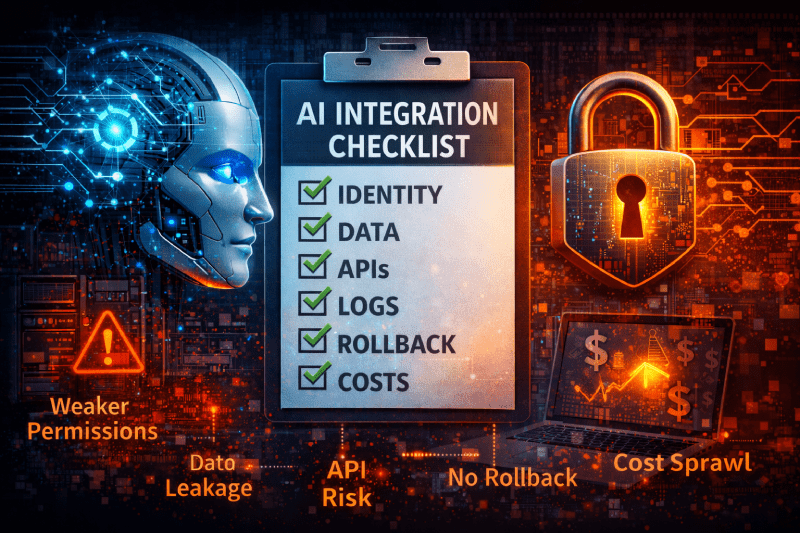

Security Checklist for AI on Insecure Web Infrastructure

If I had to review an AI-enabled web system in the real world, this is where I would start.

1. Start With Identity and Least Privilege

Before anything else, I want to know who or what can access the AI feature and what permissions it inherits.

- Is the AI process using a broad service account?

- Does it have access to internal systems it does not truly need?

- Are roles separated cleanly between users, administrators, developers, and automation?

- Can the AI feature pull data across boundaries that a normal user could not?

This matters because AI integrations often become permission multipliers. In other words, a system with excessive privilege can expose far more than a normal front-end bug ever would.

Therefore, if identity is sloppy, I would stop there and fix that first.
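
As a rough sketch of what that can look like, assume a hypothetical document store where every record carries the set of roles allowed to read it. The retrieval step then filters by the calling user's roles instead of relying on whatever the service account can reach. The names `Document`, `User`, and `retrieve_for_user` are illustrative and do not come from any particular framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_roles: frozenset  # roles permitted to read this document


@dataclass(frozen=True)
class User:
    user_id: str
    roles: frozenset


def retrieve_for_user(user: User, candidates: list) -> list:
    """Return only the documents the calling user could read directly.

    The AI feature never receives a document the user is not entitled to,
    so it cannot pull data across a boundary the user could not cross.
    """
    return [doc for doc in candidates if user.roles & doc.allowed_roles]


docs = [
    Document("kb-1", "Public FAQ", frozenset({"customer", "support"})),
    Document("hr-7", "Salary bands", frozenset({"hr"})),
]
customer = User("u-42", frozenset({"customer"}))

# Only kb-1 is passed on to the model as context for this user.
print([d.doc_id for d in retrieve_for_user(customer, docs)])
```

The design choice here is that authorization happens before the model sees anything, rather than asking the model to withhold data it has already been given.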

2. Map Data Exposure Clearly

Next, I want a simple answer to this question:

What data goes in, where does it go, who can see it, and where is it stored?

Surprisingly often, teams cannot answer that cleanly.

They know the application calls an AI API. However, they may not know whether prompts are logged, whether outputs are retained, whether debugging captures sensitive content, or whether data moves through systems outside the intended trust boundary.

As a result, organizations risk exposing customer data, employee data, internal documentation, credentials, secrets, or other regulated and proprietary information.

Accordingly, every AI rollout should begin with a basic data flow review. If the team cannot diagram the path, they do not yet control the risk.
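
One small control that falls out of a data flow review is redacting obvious sensitive values before a prompt leaves the trust boundary. The sketch below is a minimal illustration under stated assumptions: the two regular expressions and the labels `EMAIL` and `SECRET` are examples I chose, not a complete or vetted pattern set.

```python
import re

# Illustrative patterns only; a real deployment needs a reviewed, broader set.
REDACTION_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SECRET": re.compile(r"(?i)\b(?:api[_-]?key|token|password)\s*[:=]\s*\S+"),
}


def redact(text: str):
    """Replace sensitive matches with placeholders and count what was removed."""
    counts = {}
    for label, pattern in REDACTION_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


prompt = "Contact jane@example.com, api_key=sk-12345 is failing."
safe_prompt, removed = redact(prompt)
print(safe_prompt)  # what would be sent to the external API
print(removed)      # what the data flow review can report on
```

Returning the counts alongside the text gives the team a simple signal for how often sensitive content is reaching the AI path at all.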

3. Treat Third-Party and API Risk as Core Risk

AI features often depend on external vendors, APIs, plugins, or connectors. Meanwhile, many companies still treat those dependencies like simple add-ons.

That is a mistake.

- What happens if the service changes behavior?

- What happens if pricing changes suddenly?

- What happens if the provider has an outage?

- What happens if the provider stores data differently than expected?

- What happens if an integration token is compromised?

In practice, the AI layer is rarely independent. Instead, it usually sits atop an ecosystem of vendors and services. Consequently, a third-party review is not optional. It is part of the core design.
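
To make the outage question concrete, here is a minimal circuit-breaker sketch. It assumes the vendor call is wrapped in a plain function that raises on failure; `ProviderGuard` and `flaky_provider` are hypothetical names, and a production version would catch the vendor's specific error types and add a timed retry window.

```python
from typing import Callable


class ProviderGuard:
    """Stop calling a failing provider after `max_failures` consecutive errors."""

    def __init__(self, call_provider: Callable[[str], str], max_failures: int = 3):
        self._call = call_provider
        self._max_failures = max_failures
        self._failures = 0

    @property
    def open(self) -> bool:
        # "Open" means the breaker has tripped and the provider is bypassed.
        return self._failures >= self._max_failures

    def ask(self, prompt: str, fallback: str) -> str:
        if self.open:
            return fallback
        try:
            answer = self._call(prompt)
        except Exception:  # narrow this to the vendor's error types in practice
            self._failures += 1
            return fallback
        self._failures = 0  # any success resets the counter
        return answer

    def reset(self) -> None:
        """Manually close the breaker, for example after the vendor recovers."""
        self._failures = 0


def flaky_provider(prompt: str) -> str:
    # Stand-in for a real vendor SDK call; it always fails in this demo.
    raise TimeoutError("provider unavailable")


guard = ProviderGuard(flaky_provider, max_failures=2)
for _ in range(3):
    print(guard.ask("Summarize ticket 123", fallback="Summary unavailable."))
print("breaker open:", guard.open)
```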

4. Make Logging, Monitoring, and Observability Real

Many teams can deploy features faster than they can observe them. Unfortunately, AI makes that weakness more dangerous.

If an AI-enabled system behaves unexpectedly, you need to know:

- what prompt triggered the action

- what system call or data access followed

- which user initiated it

- which downstream services were involved

- whether the result was blocked, logged, or completed

Without strong observability, security teams are effectively blind. Worse, when something goes wrong, engineering teams may not be able to reproduce the issue cleanly.

Therefore, before shipping AI into production, I would confirm that logs, alerts, and review paths exist for both normal use and abuse scenarios.
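
The five questions above map naturally onto one structured log record per AI request. The sketch below is one possible shape, not a standard: the field names are my own, and storing a SHA-256 hash of the prompt instead of the raw text is an assumption that trades reproducibility for lower exposure in the logs. Some teams will need the full prompt in a separate, access-controlled store.

```python
import hashlib
import json
import logging
import uuid
from datetime import datetime, timezone

logger = logging.getLogger("ai_audit")

VALID_OUTCOMES = {"blocked", "logged", "completed"}


def build_audit_record(user_id, prompt, actions, services, outcome):
    """Assemble one structured record describing a single AI request."""
    if outcome not in VALID_OUTCOMES:
        raise ValueError(f"unknown outcome: {outcome}")
    return {
        "request_id": str(uuid.uuid4()),
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,                       # which user initiated it
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "actions": list(actions),                 # system calls or data access
        "downstream_services": list(services),    # services that were involved
        "outcome": outcome,                       # blocked, logged, or completed
    }


def log_ai_request(user_id, prompt, actions, services, outcome):
    """Emit the record as a single JSON line and return it to the caller."""
    record = build_audit_record(user_id, prompt, actions, services, outcome)
    logger.info(json.dumps(record, sort_keys=True))
    return record


logging.basicConfig(level=logging.INFO)
log_ai_request(
    user_id="u-42",
    prompt="Summarize ticket 123",
    actions=["read:ticket:123"],
    services=["ticket-db", "llm-provider"],
    outcome="completed",
)
```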

5. Add Controls for Prompt Abuse and Input Manipulation

AI features create new input surfaces. That means old security habits are not enough by themselves.

It is no longer just about validating form fields or filtering file uploads. Now you also have to consider:

- prompt injection

- malicious document content

- manipulated user input

- attempts to bypass policy

- indirect instructions hidden in connected data sources

Moreover, the more connected the AI feature becomes, the more dangerous untrusted input becomes.

For that reason, teams should define clear controls around what the AI can act on, what it can retrieve, what it can trigger, and what must always require explicit human approval.

If the model can take actions, the bar should be even higher.
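
One way to express those controls is a deny-by-default action policy that sits between the model and anything it can trigger. This is a minimal sketch; the action names and the `authorize` function are hypothetical, and a real system would also verify who gave the approval.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


# Hypothetical action names; anything not listed is denied by default.
ACTION_POLICY = {
    "search_docs": Decision.ALLOW,
    "summarize_ticket": Decision.ALLOW,
    "send_email": Decision.NEEDS_APPROVAL,
    "issue_refund": Decision.NEEDS_APPROVAL,
}


def authorize(action: str, approved_by_human: bool = False) -> Decision:
    """Decide whether a model-requested action may run.

    Human approval can only unlock actions that the policy marks as
    NEEDS_APPROVAL; it never overrides a DENY.
    """
    decision = ACTION_POLICY.get(action, Decision.DENY)
    if decision is Decision.NEEDS_APPROVAL and approved_by_human:
        return Decision.ALLOW
    return decision


for requested in ("search_docs", "send_email", "delete_user"):
    print(requested, "->", authorize(requested).value)
```

Because the policy lives outside the prompt, an injected instruction can ask for an unlisted action but cannot grant itself permission to run it.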

6. Build Rollback and Change Management Before Launch

This is where mature engineering matters.

If the AI feature misbehaves in production, can you deactivate it quickly? Can you revert to a safe mode? Can you isolate the impacted workflow without taking down the entire application?

Too many organizations launch AI features as if they are harmless enhancements. However, once the feature affects customer output, internal operations, search results, support workflows, or automated decisions, the blast radius can grow quickly.

Before launch, I would want to see these in place:

- a kill switch

- a fallback mode

- scoped release controls

- documented rollback procedures

- clear ownership across engineering, IT, and security

Ultimately, resilience is part of security.
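
A kill switch and a fallback mode do not need to be elaborate. The sketch below assumes a hypothetical `AI_FEATURE_MODE` environment variable with three values and a made-up `summarize_ticket` feature; most teams would use their existing feature-flag system instead, but the shape is the same.

```python
import os


def ai_mode(env=None) -> str:
    """Read the operating mode from a hypothetical AI_FEATURE_MODE variable.

    "on" calls the model, "fallback" uses a non-AI path, "off" disables
    the feature. Missing or unknown values fail closed to "off".
    """
    env = os.environ if env is None else env
    mode = env.get("AI_FEATURE_MODE", "off").strip().lower()
    return mode if mode in {"on", "fallback", "off"} else "off"


def summarize_ticket(ticket_text, model_call, env=None):
    """Return a summary, a degraded summary, or None if the feature is off."""
    mode = ai_mode(env)
    if mode == "on":
        return model_call(ticket_text)
    if mode == "fallback":
        # Safe mode without the model: show the start of the ticket.
        return ticket_text[:200]
    return None  # feature disabled; the caller hides the summary UI


def fake_model(text):
    # Stand-in for a real model call.
    return "AI summary of: " + text


for mode in ("on", "fallback", "off"):
    print(mode, "->", summarize_ticket("Printer is offline", fake_model, {"AI_FEATURE_MODE": mode}))
```

Failing closed on an unknown value is deliberate: a typo in configuration should disable the feature, not silently enable it.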

7. Treat Cost Controls as Security Controls Too

This point is often overlooked. Nevertheless, it matters.

AI consumption can become expensive very quickly. If usage is poorly controlled, the organization may face runaway API costs, wasteful token consumption, or abusive automation patterns that increase both expense and exposure.

That is not just a finance problem. It is also a governance problem.

- Who approves high-volume usage?

- What usage thresholds trigger review?

- Are there per-user or per-feature limits?

- Can unnecessary requests be cached or reduced?

- Is the business value actually measurable?

In a mature environment, cost discipline and security discipline reinforce each other. Both require visibility, limits, accountability, and ownership.
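
Per-user limits and review thresholds can start as something very small. This sketch keeps usage in memory for a single period and assumes token counts are reported by the caller; `TokenBudget`, the 1,000-token limit, and the 80% review threshold are illustrative values, not recommendations.

```python
from collections import defaultdict


class TokenBudget:
    """Track per-user token usage against a fixed limit for one period."""

    def __init__(self, per_user_limit: int, review_threshold: float = 0.8):
        self.per_user_limit = per_user_limit
        self.review_threshold = review_threshold
        self._used = defaultdict(int)

    def used(self, user_id: str) -> int:
        return self._used[user_id]

    def try_spend(self, user_id: str, tokens: int) -> bool:
        """Record the spend and return True, or refuse and return False."""
        if tokens < 0:
            raise ValueError("tokens must be non-negative")
        if self._used[user_id] + tokens > self.per_user_limit:
            return False
        self._used[user_id] += tokens
        return True

    def needs_review(self, user_id: str) -> bool:
        """True once usage reaches the review threshold share of the limit."""
        return self._used[user_id] >= self.review_threshold * self.per_user_limit


budget = TokenBudget(per_user_limit=1000)
for request_tokens in (700, 200, 200):
    allowed = budget.try_spend("u-42", request_tokens)
    print(request_tokens, "allowed" if allowed else "refused",
          "| used:", budget.used("u-42"),
          "| review:", budget.needs_review("u-42"))
```

The same counter serves both purposes described above: it caps spend, and it surfaces unusual usage that may indicate abusive automation.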

What Good AI Adoption Actually Looks Like

In my view, secure AI adoption should look boring in the best possible way. In practice, that means:

- clean identity boundaries

- deliberate data access rules

- documented third-party decisions

- meaningful logging

- layered safeguards

- reversible deployments

- measured costs

- named owners

That may not sound flashy. However, that is exactly the point.

The companies that get real value from AI will probably not be the ones with the loudest marketing. Instead, they will be the ones who quietly built systems that are secure, observable, governable, and sustainable over time.

That is what separates a real capability from a risky experiment.

Quick Recap for Busy Leaders

Before you ship AI, review these seven areas:

- identity and least privilege

- data exposure and storage paths

- third-party and API risk

- logging and observability

- prompt abuse controls

- rollback and change management

- cost limits and ownership

That checklist is not glamorous. However, it is practical. More importantly, it is what keeps AI from becoming a force multiplier for existing weaknesses.

Final Thoughts on AI on Insecure Web Infrastructure

Everyone wants AI. Very few teams are asking whether the infrastructure underneath it deserves that trust.

That is the conversation more organizations need to have.

AI on insecure web infrastructure is not a modernization strategy. Instead, it is often a force multiplier for weak architecture, unclear ownership, and unmanaged risk.

So, before shipping AI, secure the stack beneath it.

That is where the real work starts.
